A partner is preparing for a client meeting and sends a prompt to the firm’s AI assistant.

“Summarize emerging data governance risks for accounting firms in North America.”

The system retrieves information from the firm’s Microsoft 365 environment and produces a response within seconds. The response combines regulatory developments, internal research, and analysis from prior engagements.

Later, the team notices a red flag. Several insights in the AI-generated summary reflect analysis drawn from confidential materials that were prepared for another client engagement.

If this happened inside your firm today, could you prove that confidential client information did not influence the AI assistant’s answer?

No files were shared and no permissions were bypassed. The assistant simply generated its response from the proprietary firm data it was already able to access.

The answer was helpful, but the source of the insight should have been blocked due to its confidential nature.

For accounting firms, this distinction matters. Regulatory standards require firms to protect client information regardless of how it’s accessed. When AI generates insights without clear engagement boundaries, organizations may struggle to demonstrate a defensible audit history for confidential client information.

When AI learns from the wrong engagement

Accounting firms manage thousands of engagements each year across audit, advisory, tax, and other practices. Each engagement generates documents, conversations, email, and research – much of it stored across Microsoft 365.

When a professional asks AI to analyze sector developments or summarize regulatory trends, the LLM evaluates all information available to it. If engagement context is not clearly defined with metadata and governance policies, the AI can’t distinguish between broadly accessible knowledge and confidential client engagement data. This can lead to responses influenced by information that should have remained restricted.

When a firm unknowingly uses that information in its deliverables, the firm’s credibility and client trust are put at risk. Ask this question of your organization:

Is our AI operating within clearly defined engagement boundaries? Or is it drawing from everything it can access across our Microsoft 365 environment?
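
One way to picture an engagement boundary is as a metadata filter applied before any content reaches the AI assistant. The following is a minimal sketch under stated assumptions, not a description of how Microsoft 365 or any particular product works: the `Document` type, the `engagement_tag` field, the `firm-wide` label, and the `ENG-A` style IDs are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from typing import Optional

FIRM_WIDE = "firm-wide"  # hypothetical label for non-client knowledge


@dataclass(frozen=True)
class Document:
    title: str
    engagement_tag: Optional[str]  # FIRM_WIDE, an engagement ID, or None if untagged


def in_scope(doc: Document, active_engagement: str) -> bool:
    """Return True only for firm-wide material or material tagged to the active engagement."""
    if doc.engagement_tag is None:
        # Untagged content is excluded: without metadata its owner is unknown.
        return False
    return doc.engagement_tag in (FIRM_WIDE, active_engagement)


def filter_for_engagement(docs: list[Document], active_engagement: str) -> list[Document]:
    """Drop out-of-scope documents before they are handed to the AI assistant."""
    return [d for d in docs if in_scope(d, active_engagement)]


candidates = [
    Document("Regulatory trends briefing", FIRM_WIDE),
    Document("Client A risk analysis", "ENG-A"),
    Document("Client B audit workpapers", "ENG-B"),
    Document("Untitled notes", None),
]

allowed = filter_for_engagement(candidates, "ENG-A")
print([d.title for d in allowed])
```

In this sketch, untagged content is denied by default. That is a deliberately conservative design choice: if metadata is missing, the filter cannot tell whether a document is general knowledge or confidential engagement material.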

Building on Microsoft 365 to support AI safely

Most firms have some form of governance in their environments. The challenge is how those controls extend to keep pace with the way AI now generates and synthesizes information.

Generative AI behaves the way we instruct it to. Instead of retrieving a specific file, it analyzes collections of information to generate a response. It can’t organize that information on its own, so it relies on structured metadata and access controls to determine which information it can process for analysis.

When structure, metadata, access, and governance controls are inconsistent, engagement information is exposed to unintended use. Using automation to curate engagement context for AI gives firms a consistent way to capture firm knowledge, improve fee earner efficiency, and make proprietary knowledge safely available to AI.

The governance question has shifted from applying document security to enforcing knowledge boundaries. Controlling who can open a file is no longer enough. Firms must now also control what can open it. That level of control requires automated engines that govern what AI can access in the firm’s environment. Otherwise, AI will consume any information it can locate and access.

In a recent webinar discussion, technology and risk leaders from professional services firms described how these issues are already emerging in firm systems like Microsoft 365. Several of the scenarios involve AI responses influenced by material stored across multiple engagement workspaces, revealing governance gaps firms had not previously considered.

These scenarios and the controls firms are implementing are explored in more detail in the webinar recording.

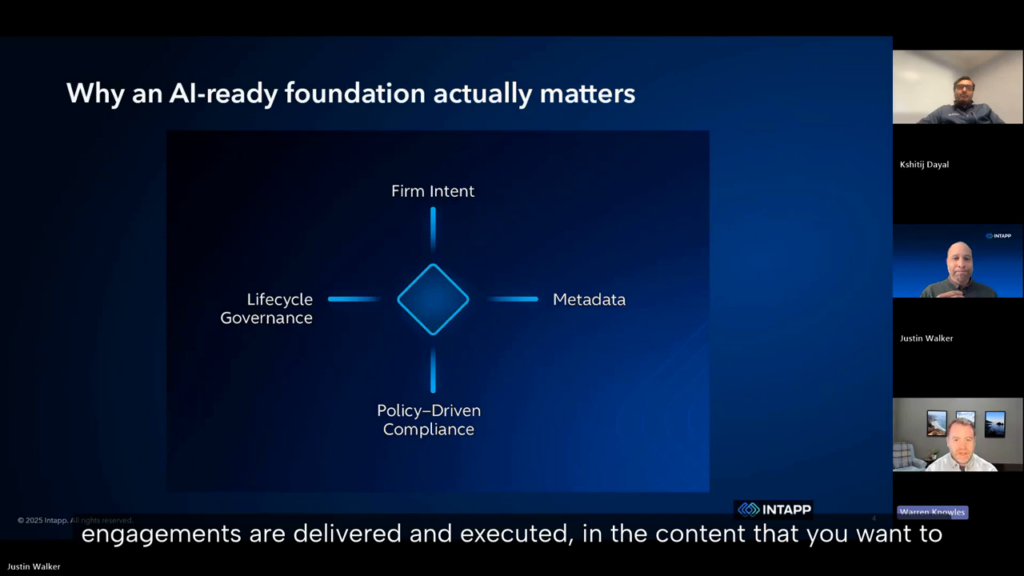

Metadata defines the boundaries AI must respect

Metadata defines the boundaries that AI must respect. It determines which information belongs to a specific client engagement and which material is publicly available for analysis.

When those boundaries are unclear, AI will generate insights influenced by content that should have remained restricted. Even if the professional using the output is unaware of its source, the firm remains accountable.

That uncertainty creates a governance challenge. If the firm can’t demonstrate that client information is securely controlled, the firm may struggle to prove confidentiality obligations were enforced.

In an AI-enabled environment, metadata and access controls become the foundation for protecting client information at scale.
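
To make the idea of demonstrating control more concrete, a firm could record which sources contributed to each AI response and whether any fell outside the active engagement. The sketch below is illustrative only. It assumes the AI system can report the documents it consulted along with their engagement tags, which may not be true of every tool, and the function name, field names, and `firm-wide` label are hypothetical.

```python
import json

FIRM_WIDE = "firm-wide"  # hypothetical label for non-client knowledge


def build_audit_entry(prompt, active_engagement, sources):
    """Build one audit record for one AI response.

    sources: list of (document_title, engagement_tag) pairs the AI consulted.
    """
    # A source is out of scope if it belongs to a different engagement.
    out_of_scope = [
        title for title, tag in sources
        if tag not in (FIRM_WIDE, active_engagement)
    ]
    return {
        "prompt": prompt,
        "active_engagement": active_engagement,
        "sources": [{"document": t, "engagement_tag": g} for t, g in sources],
        "out_of_scope_sources": out_of_scope,
        "within_boundary": not out_of_scope,
    }


entry = build_audit_entry(
    "Summarize emerging data governance risks",
    "ENG-A",
    [
        ("Regulatory trends briefing", FIRM_WIDE),
        ("Client B audit workpapers", "ENG-B"),
    ],
)
print(json.dumps(entry, indent=2))
```

A record like this does not prevent exposure by itself, but it gives the firm something to point to when asked whether a given response stayed within its engagement boundary.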

Lifecycle governance prevents long-term exposure

The governance challenge rarely begins with AI itself. It often begins with collaboration environments that outlive the engagements they were created to support.

Workspaces frequently remain active after engagements close. Team membership changes and permissions expand while documents accumulate outside the original context.

When AI analyzes that environment, it inherits those historical decisions. A workspace containing years of client content becomes a broad data source for AI inference.

Effective AI governance in accounting therefore depends on lifecycle governance that manages how collaboration environments are created, maintained, and retired across the engagement lifecycle.
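
As a rough illustration of the retirement step, a lifecycle policy might flag workspaces that are still active well after their engagement has closed. This is a minimal sketch, not a reference implementation: the `Workspace` fields, the example names, and the 90-day grace period are assumptions made for the example, and real retention periods depend on the firm’s own policies and regulatory obligations.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass(frozen=True)
class Workspace:
    name: str
    engagement_closed_on: Optional[date]  # None while the engagement is still open
    archived: bool


def overdue_for_retirement(
    ws: Workspace,
    today: date,
    grace_period: timedelta = timedelta(days=90),  # hypothetical policy value
) -> bool:
    """Flag workspaces left active longer than the grace period after engagement close."""
    if ws.engagement_closed_on is None or ws.archived:
        return False
    return today - ws.engagement_closed_on > grace_period


workspaces = [
    Workspace("Client A audit", date(2024, 12, 31), archived=False),
    Workspace("Client B tax", date(2025, 5, 1), archived=False),
    Workspace("Client C advisory", None, archived=False),
    Workspace("Client D audit", date(2023, 6, 30), archived=True),
]

today = date(2025, 6, 1)
flagged = [ws.name for ws in workspaces if overdue_for_retirement(ws, today)]
print(flagged)
```

Workspaces flagged this way are candidates for archiving or access review before AI treats their accumulated content as a general data source.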

Why this is becoming a leadership issue

Many discussions about AI focus on productivity gains. Firm leaders face a more fundamental question.

Can the firm scale AI use across systems like Microsoft 365 while protecting client confidentiality and maintaining regulatory compliance?

Firms that can demonstrate those controls will expand AI use more confidently because their governance framework supports it.

Those that cannot may have to slow adoption or accept greater operational risk, limiting the value they can extract from their technology investments.

The gap is not about access to AI – it’s about the ability to govern it.

How firms are addressing AI governance

The shift toward AI-enabled collaboration is forcing accounting firms to reconsider how data governance works in practice.

In the recording, technology and AI leaders discuss how firms are identifying governance gaps exposed by AI and how structured metadata and policy-driven governance controls help ensure confidential client information remains protected.

The discussion also explores how lifecycle governance in collaboration environments aligns with confidentiality and compliance requirements across the engagement lifecycle.

Watch the webinar to see how firms are enabling AI in accounting environments while protecting client trust.